3 Critical AI Policy Gaps

A US federal court ruled in February 2026 that conversations between a securities fraud defendant and an AI chatbot were not protected by attorney-client privilege. The defendant, a former chairman of the bankrupt financial services company GWG Holdings, had used a consumer AI tool to prepare case-related reports for his defence team. He assumed the conversations were confidential. They were not. The judge ordered 31 AI-generated documents disclosed, holding that no AI acceptable use policy or platform terms of service could manufacture the confidentiality that an attorney-client relationship requires. For AIGP candidates, this ruling is a concise lesson in what happens when an organisation's AI policy has operational gaps.

What the AIGP Exam Expects of an AI Acceptable Use Policy

The IAPP publishes a Body of Knowledge (BoK) for the AIGP certification, defining every domain and topic the exam covers. Candidates sometimes treat the BoK as an abstract outline. It is not. The BoK makes AI acceptable use policy obligations concrete across several performance indicators, and the exam tests whether you understand them in operational terms.

Section I.C.1 requires candidates to know how to create and implement policies ensuring oversight and accountability across all AI lifecycle stages. That includes acceptable use controls: who may use which AI tools, for what purposes and under what conditions. Section I.C.2 takes it further. Existing privacy, security and intellectual property policies must be evaluated and updated for AI. A pre-AI confidentiality policy that says nothing about AI tool usage is, by the BoK's own standard, incomplete. Candidates who treat policy updates as a one-off exercise rather than a recurring obligation will struggle with scenario questions on this topic.

Section I.C.3 adds third-party risk management. Every consumer AI platform is a third party. If your organisation's AI acceptable use policy does not address how staff interact with external AI services, the policy has a gap the exam will test. The AIGP 2026 curriculum reinforces this system-level perspective, treating third-party AI risk as a governance function rather than a procurement checkbox. The exam does not ask whether policies exist in the abstract; it asks whether they are integrated, updated and enforced across operational contexts (IV.C.1).

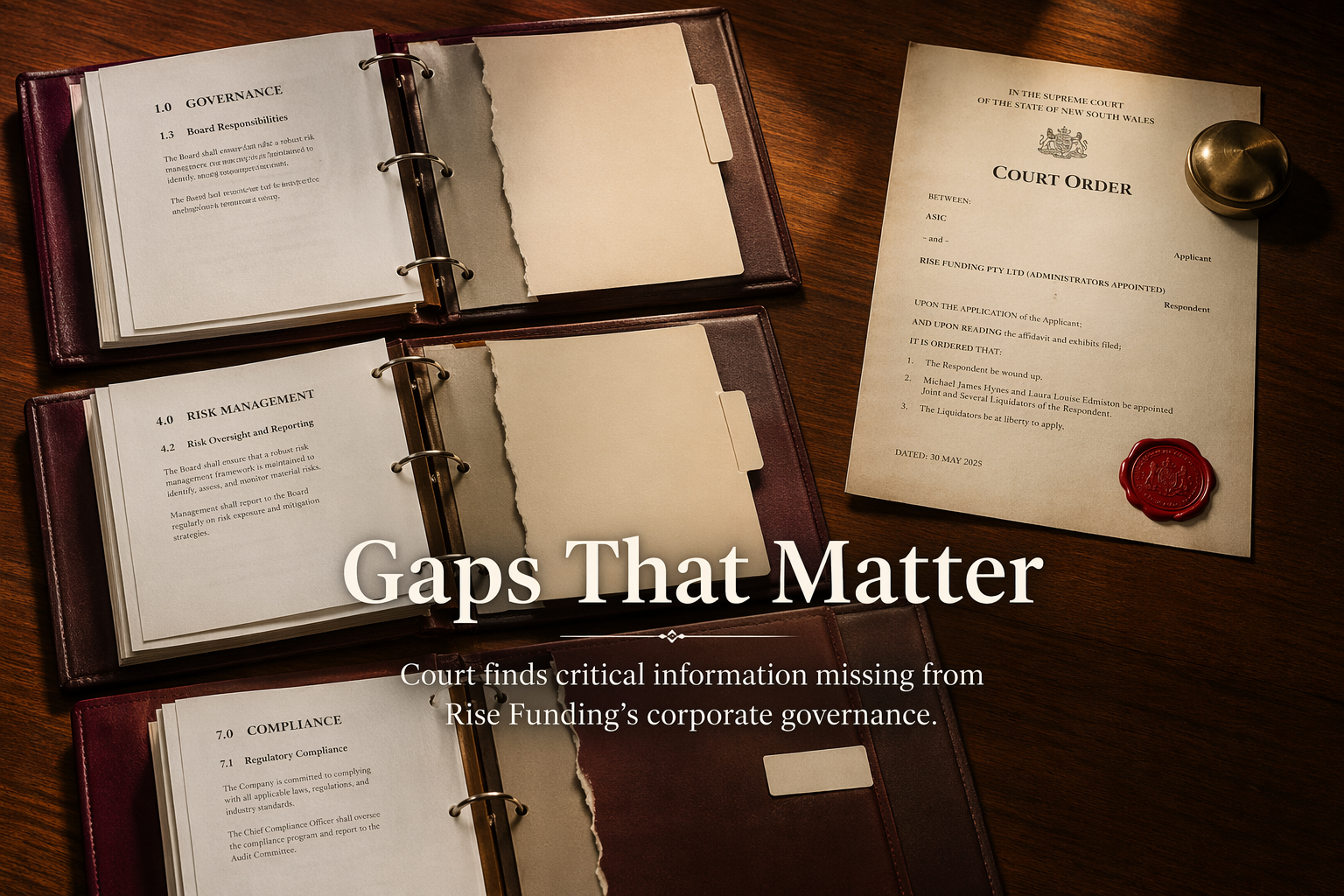

3 AI Policy Gaps the GWG Holdings Ruling Exposes

The court's reasoning maps onto three specific governance failures, each tied to a BoK requirement. If you can identify these gaps in a scenario, you can answer the exam question that follows.

Channel Confidentiality

The court found that the defendant had no reasonable expectation of privacy when using a consumer AI platform. The service's terms explicitly permitted data review and disclosure to government authorities. For the AIGP exam, this maps directly to I.C.3: organisations must assess third-party contracts and acceptable use agreements before permitting AI tools for sensitive work. A well-drafted AI acceptable use policy classifies AI channels by confidentiality tier. Consumer-grade platforms sit in a different tier from enterprise deployments with contractual data-processing agreements. The defendant's organisation apparently made no such distinction; the AI acceptable use policy, if one existed at all, did not match the tool to the risk.
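To make the tiering idea concrete, here is a minimal Python sketch of how a policy might match a tool to the risk. The tier names, the sensitivity levels and the `MIN_TIER` mapping are all illustrative assumptions, not taken from the BoK or from the ruling; a real policy would define its own categories.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    """Hypothetical confidentiality tiers, least to most protective."""
    CONSUMER = 1    # public terms of service, no data-processing agreement
    ENTERPRISE = 2  # contractual data-processing agreement in place


class Sensitivity(IntEnum):
    """Hypothetical data sensitivity levels."""
    PUBLIC = 1
    INTERNAL = 2
    PRIVILEGED = 3


# Assumed policy table: the minimum channel tier for each sensitivity level.
MIN_TIER = {
    Sensitivity.PUBLIC: Tier.CONSUMER,
    Sensitivity.INTERNAL: Tier.ENTERPRISE,
    Sensitivity.PRIVILEGED: Tier.ENTERPRISE,
}


@dataclass(frozen=True)
class AIChannel:
    name: str
    tier: Tier


def is_permitted(channel: AIChannel, data: Sensitivity) -> bool:
    """Return True if the channel's tier meets the minimum for this data."""
    return channel.tier >= MIN_TIER[data]


# Usage: a consumer chatbot is acceptable for public material only.
chatbot = AIChannel("consumer-chatbot", Tier.CONSUMER)
assistant = AIChannel("enterprise-assistant", Tier.ENTERPRISE)
print(is_permitted(chatbot, Sensitivity.PRIVILEGED))    # False
print(is_permitted(assistant, Sensitivity.PRIVILEGED))  # True
```

The point of the table is that the decision is made once, in the policy, rather than by each member of staff at the moment of use.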

Logging and Retention of Prompts and Outputs

Prosecutors obtained 31 documents the AI tool had generated and retained. Those records existed because the platform stored prompt and output data by default. BoK section III.C.4 requires candidates to understand how organisations manage and document incidents, issues and risks. That obligation extends to AI-generated records. If an organisation cannot specify what AI outputs it retains, how long it keeps them and who can access them, it cannot meet this standard. The AI acceptable use policy must address record-keeping for AI outputs with the same discipline applied to email retention or document management systems. Candidates preparing for the exam should note that the restructured AIGP curriculum separates AI development from AI deployment governance precisely so that record-keeping obligations are not lost in the gap between the two.
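The three questions above (what is retained, for how long, and who can access it) can be expressed as a small rule table. The following Python sketch is one way to do that; the record kinds, retention periods and role names are hypothetical placeholders, not values recommended by the BoK.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class RetentionRule:
    retention_days: int       # how long the record is kept
    allowed_roles: frozenset  # who may access it


@dataclass(frozen=True)
class AIRecord:
    record_id: str
    created: date
    kind: str  # "prompt" or "output"


# Assumed rules for illustration only; a real policy sets its own values.
RULES = {
    "prompt": RetentionRule(90, frozenset({"legal", "compliance"})),
    "output": RetentionRule(365, frozenset({"legal", "compliance", "author"})),
}


def is_expired(record: AIRecord, today: date) -> bool:
    """Return True once the record is past its retention period."""
    rule = RULES[record.kind]
    return today > record.created + timedelta(days=rule.retention_days)


def can_access(record: AIRecord, role: str) -> bool:
    """Return True if the role is allowed to access this kind of record."""
    return role in RULES[record.kind].allowed_roles
```

If an organisation cannot fill in a table like `RULES` for the AI tools its staff use, that is the gap the paragraph above describes.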

Training on Discovery-Aware Use

The defendant apparently never considered that his AI interactions could be compelled in legal proceedings. BoK section IV.C.4 requires documentation of incidents, risks and post-market monitoring plans. Section I.C.1 expects training and awareness programmes across all lifecycle stages. An AI acceptable use policy that exists only on paper, without staff training on discovery risk and litigation hold procedures for AI-generated content, fails both requirements. The exam tests this by asking what an AI governance professional should do first when a gap between written policy and operational practice surfaces. The answer is almost always: train the people using the tools.

What Would the Exam Ask Here?

AIGP scenario questions test whether candidates can connect policy requirements to operational failures. Three plausible question stems drawn from this ruling:

- An employee uses a consumer AI chatbot to summarise privileged legal advice received from external counsel. Which AI acceptable use policy gap does this expose under BoK I.C.3?

- An organisation's AI policy does not specify retention periods for AI-generated outputs. A regulator requests copies of all AI-assisted analyses produced in the past 12 months. Which lifecycle governance obligation is unmet under III.C.4?

- A company discovers that staff have been using a public AI tool for internal strategy work without approval. What should the AI governance professional recommend first under IV.C.1?

Each tests whether the candidate understands that an AI policy must be specific, operational and enforced, not merely aspirational.

Test Your Own AI Acceptable Use Policy

If your organisation uses AI tools, run three checks. Does the AI acceptable use policy classify AI platforms by confidentiality tier, distinguishing consumer tools from enterprise deployments? Does it specify retention and disclosure rules for AI-generated records, with the same precision applied to other business documents? Has staff training covered what happens when AI outputs become discoverable in litigation, regulatory inquiry or audit? If any answer is uncertain, you have found your next study priority. Explore the AIGP study resources at 22academy.com/study and bring your policy questions to the AIGP study groups on Facebook and LinkedIn.