GPAI Model Obligations: 5 Essential Exam Facts

The EU AI Act’s GPAI model obligations have been live since August 2025. Full enforcement powers, including fines of up to 3% of global annual turnover, start on 2 August 2026. That is five months away. Meanwhile, only eight of twenty-seven Member States have communicated their single points of contact to the European Commission. The governance infrastructure is visibly incomplete. If you are preparing for the AIGP exam, this is the part of the AI Act you need to know cold.

What the AIGP Exam Tests on GPAI Model Obligations

The IAPP’s Body of Knowledge for the AIGP certification (the document that defines every topic the exam can test, available on iapp.org) places general-purpose AI squarely inside Domain II.C: “Understand the distinct requirements for general-purpose AI models.” Domain II carries between 19 and 23 questions, and section II.C alone accounts for 6 to 8 of those. The performance indicators also require you to understand the enforcement framework and the differences between providers, deployers, importers and distributors.

Candidates regularly confuse three things: what applies to all GPAI providers, what applies only to providers of models with systemic risk and where the Code of Practice fits. The exam tests all three, often in a single scenario question.

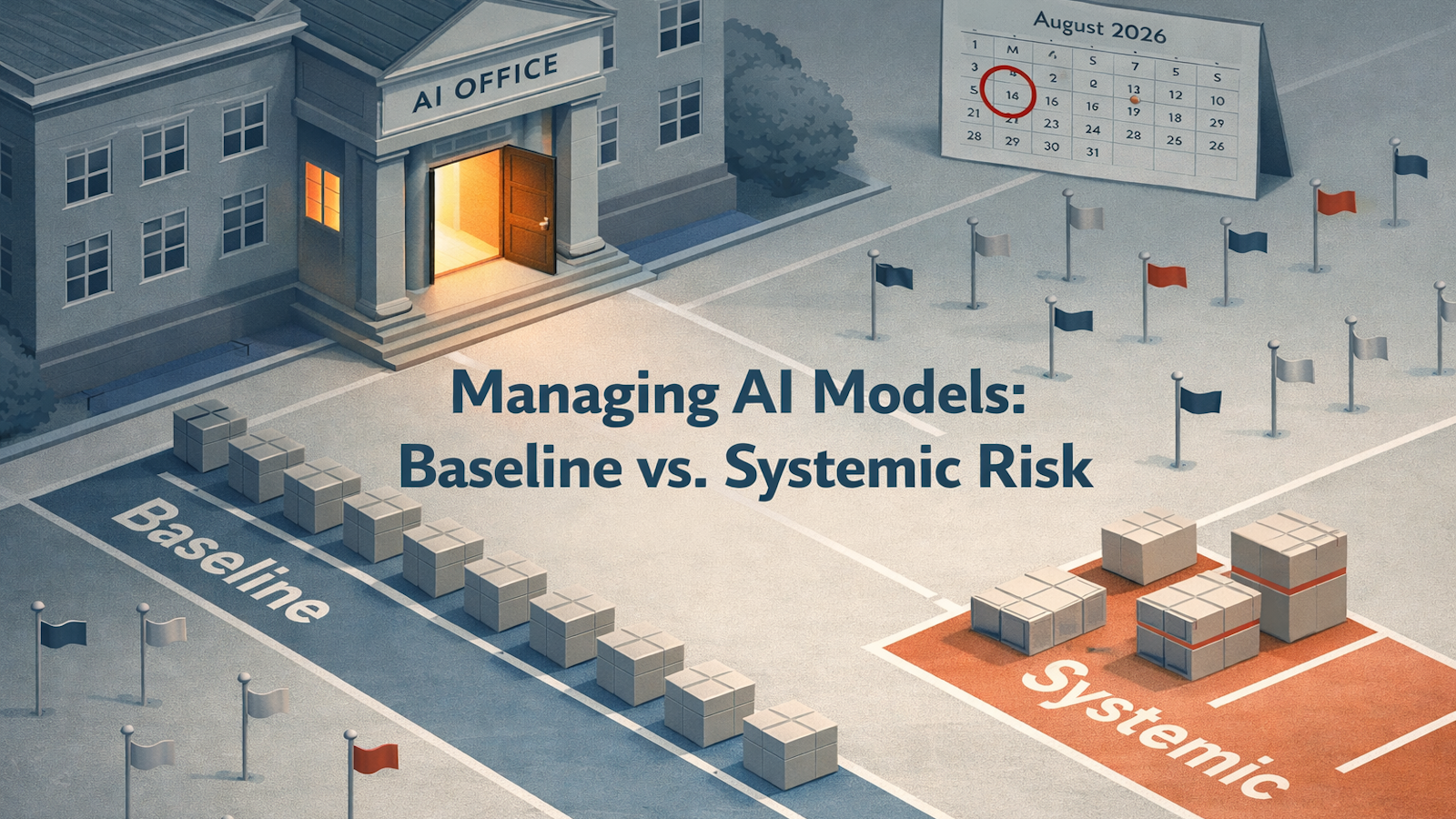

The Two-Tier Structure of GPAI Model Obligations

The AI Act creates two layers of obligation for GPAI model providers. The first applies to every provider that places a GPAI model on the EU market. These baseline requirements became applicable on 2 August 2025 and include technical documentation, a copyright compliance policy and a detailed summary of training data content.

The second layer applies only to providers of GPAI models classified as presenting systemic risk. A model is presumed to carry systemic risk if the cumulative computation used for training exceeds 10²⁵ floating-point operations, or if the European Commission designates it as such. Providers of these models must also conduct model evaluations, implement risk management policies, maintain cybersecurity protections and report serious incidents to the AI Office.
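As a study aid, the two-tier logic above can be sketched as a small decision rule. This is a minimal illustration, not a legal test: the names `GpaiModel`, `has_systemic_risk` and `obligations` are hypothetical, the obligation lists simply restate the items named in this section, and the sketch treats the compute presumption as if it were conclusive.

```python
from dataclasses import dataclass

# Threshold stated in the text: cumulative training compute above 10^25 FLOPs.
# Kept as an integer so the comparison is exact at the boundary.
SYSTEMIC_RISK_FLOPS = 10**25

# Tier 1: applies to every GPAI model provider (as listed in this section).
BASELINE = [
    "technical documentation",
    "copyright compliance policy",
    "summary of training data content",
]

# Tier 2: added only for models with systemic risk (as listed in this section).
SYSTEMIC_ADDITIONS = [
    "model evaluations",
    "risk management policies",
    "cybersecurity protections",
    "serious incident reporting to the AI Office",
]


@dataclass
class GpaiModel:
    """Hypothetical record describing a model in an exam scenario."""
    name: str
    training_flops: int
    commission_designated: bool = False


def has_systemic_risk(model: GpaiModel) -> bool:
    """True if compute exceeds the threshold or the Commission designated the model."""
    return model.training_flops > SYSTEMIC_RISK_FLOPS or model.commission_designated


def obligations(model: GpaiModel) -> list[str]:
    """Baseline duties always apply; systemic risk duties are added on top."""
    duties = list(BASELINE)
    if has_systemic_risk(model):
        duties += SYSTEMIC_ADDITIONS
    return duties


if __name__ == "__main__":
    for m in (
        GpaiModel("small-model", 5 * 10**24),
        GpaiModel("frontier-model", 2 * 10**25),
        GpaiModel("designated-model", 10**23, commission_designated=True),
    ):
        print(m.name, "->", obligations(m))
```

The point to take from the sketch is that the second tier is additive: a systemic risk provider never swaps the baseline duties for the extra ones, it carries both sets.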

The exam will present a scenario and expect you to identify which tier applies. If you have only memorised the systemic risk obligations, you will miss a question about the baseline tier. If you treat the Code of Practice as a legal obligation rather than a voluntary compliance tool, you will get that wrong too.

The Code of Practice: Voluntary but Exam-Relevant

The GPAI Code of Practice was published by the European Commission in mid-2025. It offers practical compliance measures covering transparency, copyright and safety. The Code is not legally binding. It does not give providers a presumption of conformity with the AI Act. But it is the closest thing to a compliance roadmap the Commission has issued for GPAI model obligations, and the exam expects you to understand its status.

The distinction matters because scenario questions may describe an organisation that follows the Code and ask whether it has therefore met its legal obligations. The answer is no; the Code demonstrates good faith but does not replace the binding requirements.

Enforcement: The AI Office and the National Gap

GPAI rules are enforced at EU level by the AI Office, not by national authorities. This is different from the enforcement model for high-risk AI systems, which sits with national market surveillance authorities. The exam tests this distinction under the enforcement framework performance indicator in Domain II.C.

The practical picture reinforces the point. National implementation remains uneven; fewer than a third of Member States have communicated their designated authorities to the Commission. Enforcement powers specific to GPAI providers, including the ability to impose fines, do not apply until August 2026. Until that date, the AI Office lacks the full toolkit to penalise non-compliance.

Provider vs Deployer: The Confusion That Costs Marks

The AI Act draws a clear line between a provider (the entity that develops or places a GPAI model on the market) and a deployer (the entity that integrates or uses an AI system based on that model). GPAI model obligations fall on the provider. But candidates frequently misapply provider obligations to deployers, or assume that a company integrating a third-party model inherits the provider’s documentation duties.

If an exam scenario describes a company using a third-party GPAI model in its customer service platform, the GPAI model obligations sit with the model provider. The deploying company has separate obligations under the high-risk AI system rules (if applicable), but those are a different set of requirements entirely.

How to Study GPAI Model Obligations for the AIGP

Read Articles 51 to 56 of the AI Act alongside the GPAI Code of Practice. Build a two-column comparison: baseline obligations on one side, systemic risk additions on the other. Note the 10²⁵ FLOPs threshold and the Commission’s power to designate additional models.

Then study the enforcement structure. The AI Office enforces GPAI rules centrally; national authorities handle high-risk AI systems. The scientific panel of independent experts advises the AI Office and can issue alerts on systemic risk. These are distinct roles that exam questions separate.

Finally, practise scenario questions that test the provider/deployer boundary. If a question asks about documentation and training data summaries, that points to provider-side GPAI model obligations. If it asks about conformity assessment and post-market monitoring, that points to the high-risk AI system rules, where those duties fall on the provider of the high-risk system, not to GPAI model obligations.

Free AIGP study resources mapped to the Body of Knowledge are available at 22academy.com/study.