4 Critical AI Act Enforcement Articles

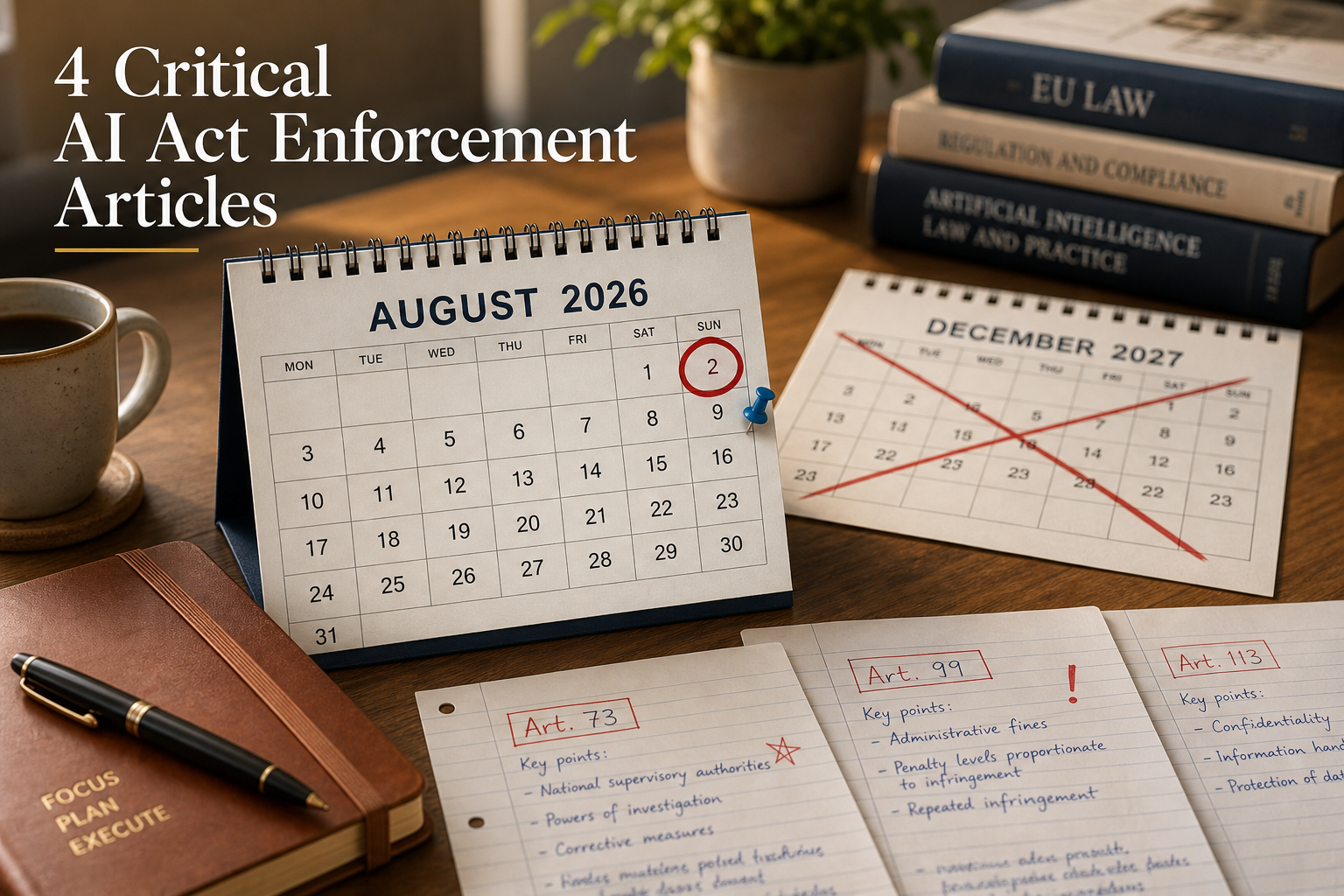

The AI Act Omnibus trilogue collapsed in Brussels on 28 April 2026, and the date you need on your AIGP study plan is 2 August 2026. Not 2 December 2027. Not whatever a follow-up trilogue eventually produces. The original AI Act enforcement deadline applies as written, and that is what the IAPP's exam writers will assume on AI Act enforcement questions.

This matters for anyone sitting the AIGP in the next twelve weeks. If you have been studying around an assumed delay, reset.

The trilogue stalled. Your study plan did not.

The European Parliament, Council and Commission spent roughly twelve hours negotiating before the file broke down over conformity assessment for AI in regulated products. A follow-up trilogue is scheduled for around 13 May 2026, but the IAPP confirmed the operational implication directly: status quo, with high-risk obligations applying from 2 August. DLA Piper put the same point to its employment-law clients in plain terms.

For exam purposes the lesson is older and simpler. The AIGP exam is built on the Body of Knowledge (the IAPP's published list of every topic the exam can test, organised into four domains) and tests the law that is in force on the day you sit it. A proposal in trilogue is not law. A press release about a forthcoming postponement is not law. Regulation (EU) 2024/1689 as published in the Official Journal is the law, and Domain II.C.5 expects you to know it.

Four AI Act enforcement articles to memorise

Four articles do most of the work on AI Act enforcement questions. Memorise the numbers and what each one does.

Article 73 and Article 99

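Article 73 is the serious-incident reporting duty for providers of high-risk AI systems. Reports go to the market surveillance authorities of the Member States where the incident occurred. The standard limit is 15 days after the provider becomes aware of the incident. It shortens to 10 days where a person has died, and to 2 days for a widespread infringement or a serious and irreversible disruption of critical infrastructure. Article 99 is the administrative fine schedule. Three tiers apply, each capped at whichever amount is higher (for SMEs, whichever is lower): up to €35 million or 7% of total worldwide annual turnover for prohibited practices; up to €15 million or 3% for breaches of high-risk obligations and other operator duties; up to €7.5 million or 1% for supplying incorrect, incomplete or misleading information to notified bodies or national competent authorities.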
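Candidates tend to slip on two Article 99 rules: the "whichever is higher" comparison, and the SME reversal to "whichever is lower". The Article 73 limits, by contrast, are just date arithmetic. The Python sketch below encodes both as a revision aid. It assumes turnover means total worldwide annual turnover for the preceding financial year, in whole euros. The function and key names are hypothetical, and this is not a fine calculator: authorities set actual amounts case by case, weighing the circumstances listed in Article 99(7).

```python
from datetime import date, timedelta

# Article 99 tiers as (fixed cap in EUR, percent of total worldwide annual turnover).
# The dictionary keys are illustrative labels, not terms defined in the Regulation.
ARTICLE_99_TIERS = {
    "prohibited_practice": (35_000_000, 7),    # Article 99(3)
    "operator_obligation": (15_000_000, 3),    # Article 99(4)
    "incorrect_information": (7_500_000, 1),   # Article 99(5)
}

def article_99_cap(tier: str, turnover_eur: int, is_sme: bool = False) -> float:
    """Maximum fine for a tier: the higher of the two amounts, or the lower for SMEs."""
    fixed_eur, percent = ARTICLE_99_TIERS[tier]
    turnover_based = turnover_eur * percent / 100
    pick = min if is_sme else max
    return float(pick(fixed_eur, turnover_based))

# Article 73 outer limits in days, counted from when the provider becomes aware.
# Reports are due immediately once a causal link is established; these are
# only the latest permissible dates.
ARTICLE_73_DAYS = {
    "standard": 15,                                # Article 73(2)
    "death": 10,                                   # Article 73(4)
    "widespread_or_critical_infrastructure": 2,    # Article 73(3)
}

def latest_report_date(aware_on: date, incident_kind: str) -> date:
    """Last day for filing the Article 73 report for a given incident kind."""
    return aware_on + timedelta(days=ARTICLE_73_DAYS[incident_kind])

if __name__ == "__main__":
    # A provider with EUR 1bn turnover: 7% (EUR 70m) exceeds the EUR 35m fixed cap.
    print(article_99_cap("prohibited_practice", 1_000_000_000))  # 70000000.0
    print(latest_report_date(date(2026, 8, 10), "death"))         # 2026-08-20
```

Storing the percentage as an integer and dividing by 100 keeps the turnover-based figures exact for round turnover values. That makes the comparison with the fixed cap easy to check by hand.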

Article 100 and Article 113

Article 100 is the parallel fine regime for Union institutions, bodies, offices and agencies. The EDPS imposes these fines, capped at €1.5 million for prohibited practices and €750,000 for other breaches. Article 113 covers entry into force (1 August 2024) and applicability. The dates the exam will test are 2 February 2025 (Chapters I and II, including prohibited practices), 2 August 2025 (notified bodies, GPAI rules, governance and penalties, except the Article 101 GPAI fines), 2 August 2026 (general application, including the rest of the high-risk regime, which is the date that has dominated the Omnibus debate) and 2 August 2027 (high-risk systems integrated into products under Annex I).
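The Article 113 timeline is easiest to retain as an ordered list of start dates. The sketch below uses hypothetical names and paraphrased labels, so treat it as a memory aid rather than a restatement of the Regulation. It returns every milestone already reached on a given exam day. It also records the Article 100 caps for Union bodies (€1.5 million for prohibited practices, €750,000 for other breaches) alongside the dates.

```python
from datetime import date

# Article 113 milestones, simplified. Labels paraphrase the Regulation.
ARTICLE_113_MILESTONES = [
    (date(2024, 8, 1), "Entry into force"),
    (date(2025, 2, 2), "Chapters I and II, including prohibited practices"),
    (date(2025, 8, 2), "Notified bodies, GPAI rules, governance, penalties except Article 101"),
    (date(2026, 8, 2), "General application, incl. rest of high-risk regime and Article 101"),
    (date(2027, 8, 2), "Article 6(1): high-risk systems in Annex I products"),
]

# Article 100 caps for Union institutions, bodies, offices and agencies (imposed by the EDPS).
ARTICLE_100_CAPS_EUR = {"prohibited_practice": 1_500_000, "other_breach": 750_000}

def in_force_on(exam_day: date) -> list[str]:
    """Milestones already reached on the given day, in chronological order."""
    return [label for start, label in ARTICLE_113_MILESTONES if start <= exam_day]

if __name__ == "__main__":
    # An exam sat the day after the high-risk deadline sees four milestones in force.
    for label in in_force_on(date(2026, 8, 3)):
        print(label)
```

Calling `in_force_on` with your own exam date is a quick self-check. On any day from 2 August 2026, the general-application milestone appears in the list.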

The AI Act enforcement authorities

Articles tell you what the law says; an AI Act enforcement question often turns on who applies it. Five players matter on the exam.

AI Office, NCAs and market surveillance

The AI Office sits inside the European Commission and supervises providers of general-purpose AI models centrally. Penalty proceedings against GPAI providers run here, separate from the Member State track. Under Article 70, each Member State establishes or designates national competent authorities: at least one notifying authority and at least one market surveillance authority. Market surveillance authorities receive Article 73 incident reports and carry out on-the-ground enforcement. Notifying authorities designate and monitor the notified bodies that perform conformity assessments.

Where the EDPB and national DPAs fit

When AI processing involves personal data, the GDPR overlay sits alongside the AI Act. National DPAs retain their full GDPR enforcement competence, and the EDPB issues guidance and resolves cross-border consistency questions. The facts behind an Article 22 GDPR automated decision-making complaint can also prompt an AI Act investigation by the market surveillance authority, with Article 99 fines available. Two regulators, two fine ladders, one set of facts.
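The sketch below shows what "two fine ladders" means in practice. It places the GDPR Article 83(5) ceiling (€20 million or 4% of worldwide turnover, the tier that covers Article 22 infringements) next to the AI Act Article 99(4) ceiling for the same undertaking. It assumes a non-SME undertaking and an AI Act breach that falls in the 99(4) operator-obligation tier. The names are hypothetical, and the output shows each regulator's maximum, not what the two would actually impose together.

```python
# Ceilings as (fixed cap in EUR, percent of total worldwide annual turnover);
# for a non-SME undertaking the higher of the two applies in both regimes.
GDPR_ARTICLE_83_5 = (20_000_000, 4)    # data subject rights, including Article 22
AI_ACT_ARTICLE_99_4 = (15_000_000, 3)  # operator obligations, e.g. high-risk duties

def ceiling(cap: tuple[int, int], turnover_eur: int) -> float:
    """Higher of the fixed amount and the turnover-based amount."""
    fixed_eur, percent = cap
    return max(float(fixed_eur), turnover_eur * percent / 100)

def parallel_ceilings(turnover_eur: int) -> dict[str, float]:
    """Each regulator's maximum, side by side; not a prediction of combined fines."""
    return {
        "GDPR (national DPA)": ceiling(GDPR_ARTICLE_83_5, turnover_eur),
        "AI Act (market surveillance authority)": ceiling(AI_ACT_ARTICLE_99_4, turnover_eur),
    }

if __name__ == "__main__":
    for regime, eur in parallel_ceilings(2_000_000_000).items():
        print(f"{regime}: up to EUR {eur:,.0f}")
```

For a €2 billion undertaking, the percentage limb governs in both regimes. That is why large firms read these ladders as percentages rather than fixed sums.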

Three exam-style scenarios

A useful test of whether you have absorbed the structure: read each scenario and identify the article and the authority before reading the answer.

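A retail bank builds its own high-risk AI recruitment tool, puts it into service on 5 August 2026 and has not registered it in the EU database under Article 49. (As the tool's provider, the bank carries the registration duty; a private-sector deployer of a third-party tool would not.) Which article and which authority? Article 99(4), enforced by the market surveillance authority of the Member State of establishment, with fines of up to €15 million or 3% of worldwide turnover, whichever is higher.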

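A frontier-model provider whose general-purpose AI model exceeds the systemic-risk threshold places the model on the EU market without the transparency documentation that Article 53 requires of GPAI model providers. Which article and which authority? Article 101 fines (the GPAI-specific schedule, up to €15 million or 3% of worldwide turnover, whichever is higher), imposed by the Commission with the AI Office running enforcement centrally.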

A high-risk AI provider experiences a serious incident affecting hospital patients in three Member States and does not file a report. Article 73 obligation breached; market surveillance authorities of all three Member States with concurrent competence; Article 99 administrative fine ladder available.
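All three scenarios reduce to one question: whose breach is it? The sketch below encodes that routing as a revision aid, using hypothetical names. It is a deliberate simplification. For example, it ignores the case of an AI system built by the same provider on its own GPAI model, where the AI Office also takes on market surveillance powers.

```python
from enum import Enum

class Actor(Enum):
    GPAI_MODEL_PROVIDER = "provider of a general-purpose AI model"
    UNION_INSTITUTION = "Union institution, body, office or agency"
    OTHER_OPERATOR = "provider, deployer, importer or distributor of an AI system"

# (fine article, enforcing authority) per actor type; a simplification for revision.
FINE_ROUTE = {
    Actor.GPAI_MODEL_PROVIDER: ("Article 101", "European Commission, via the AI Office"),
    Actor.UNION_INSTITUTION: ("Article 100", "European Data Protection Supervisor"),
    Actor.OTHER_OPERATOR: ("Article 99", "National market surveillance authority"),
}

def route(actor: Actor) -> tuple[str, str]:
    """Return the fine article and the authority that enforces it for this actor."""
    return FINE_ROUTE[actor]

# The three scenarios, tagged with the actor whose breach is at issue (hypothetical labels).
SCENARIOS = {
    "bank's unregistered high-risk recruitment tool": Actor.OTHER_OPERATOR,
    "frontier GPAI model missing its GPAI documentation": Actor.GPAI_MODEL_PROVIDER,
    "unreported serious incident across three Member States": Actor.OTHER_OPERATOR,
}

if __name__ == "__main__":
    for scenario, actor in SCENARIOS.items():
        article, authority = route(actor)
        print(f"{scenario}: {article}, {authority}")
```

Once the actor type is identified, both the fine article and the authority follow. The same two-step habit is worth practising on any new scenario before reading its answer.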

Reset your AI Act enforcement timeline

The cleanest exam-preparation move this week is to take five minutes and update your study plan: cross out 2 December 2027, write 2 August 2026, and treat the four articles above as memory anchors. The substantive reasoning the AIGP exam tests on AI Act enforcement has not changed. The only real risk was the temptation to plan around an extension, and the collapsed trilogue has removed it.

Have a look at 22academy.com/study and pick the AIGP revision step that matches your remaining weeks.